A Brief Guide to Convolutional Neural Networks (CNNs)

Plus: Weekly links about small and open-source models.

Most people start learning about neural networks with a toy problem: building a three-layer perceptron that classifies handwritten digits from the MNIST dataset. This problem has become so common that it’s usually the first thing taught in online tutorials and textbooks. But the accuracy of such a network stalls out because it treats every pixel as an independent input, ignoring the spatial structure of an image. A convolutional neural network, or CNN, classifies handwritten digits much more accurately. But it’s not so easy to understand why!

A CNN is a type of neural network designed to recognize patterns in data, such as images, by sliding small filters (also called kernels) across local regions instead of connecting every input to every neuron. This sliding operation is called convolution. It makes CNNs efficient at detecting edges, textures, and shapes, and stacking layers lets the network combine those into more complex features.
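To get a rough feel for why sharing one small filter is cheaper than connecting every input to every neuron, here is a back-of-the-envelope parameter count. The layer sizes (128 dense neurons, 32 filters of size 3x3) are illustrative assumptions, not figures from any particular network.

```python
def dense_params(n_inputs, n_neurons):
    """Weights plus biases for a fully connected layer."""
    return n_inputs * n_neurons + n_neurons


def conv_params(kernel_h, kernel_w, in_channels, n_filters):
    """Weights plus biases for a convolutional layer.

    Each filter is reused at every image position, so the count
    does not depend on the image size.
    """
    return kernel_h * kernel_w * in_channels * n_filters + n_filters


# A 28x28 grayscale MNIST image has 784 pixels.
dense = dense_params(28 * 28, 128)  # hypothetical 128-neuron hidden layer
conv = conv_params(3, 3, 1, 32)     # hypothetical 32 filters of size 3x3

print(dense)  # 100480
print(conv)   # 320
```

The comparison is loose, since the two layers produce outputs of different shapes, but it shows how weight sharing keeps the number of learned values small.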

We assembled some resources that do a great job of explaining CNNs and how they work. At the end of this post, we also round up some recent developments in small, open-source models. It is the first post in what will be a weekly links series.

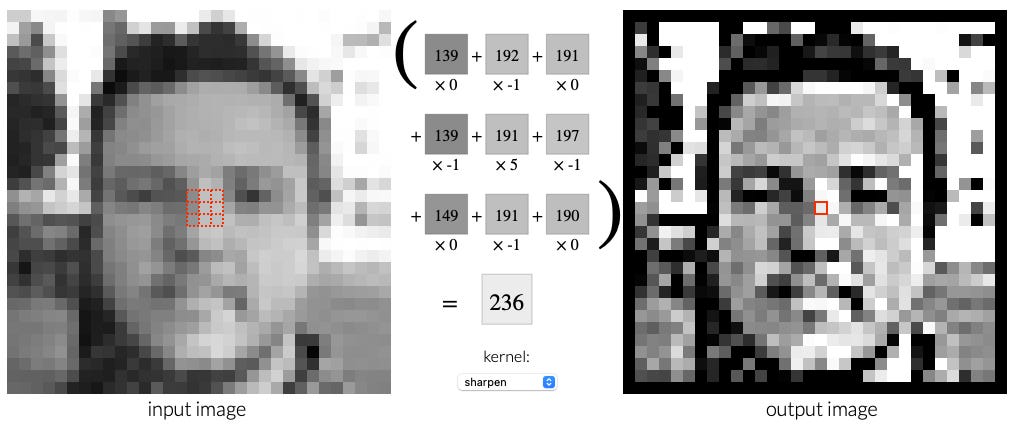

1. Setosa.io: Image Kernels, Visually Explained

We think the best way to start learning about CNNs is to see how convolutions work visually. This website does a great job at that. It explains how a kernel is just a tiny matrix, and convolution is just “multiply and sum” as it slides across an image. You will walk away knowing what convolution does, rather than solely what it is.
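If you want to try “multiply and sum” yourself, here is a minimal sketch in plain Python. The function name `convolve2d`, the tiny image, and the edge-detecting kernel are all made up for illustration. Like most deep learning libraries, it slides the kernel without flipping it, which is technically cross-correlation.

```python
def convolve2d(image, kernel):
    """Slide `kernel` over `image` (no padding, stride 1).

    At each position, multiply overlapping values and sum them.
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    output = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            total = 0
            for di in range(kh):
                for dj in range(kw):
                    total += image[i + di][j + dj] * kernel[di][dj]
            row.append(total)
        output.append(row)
    return output


# A toy image: dark on the left (0), bright on the right (10).
image = [
    [0, 0, 0, 10, 10],
    [0, 0, 0, 10, 10],
    [0, 0, 0, 10, 10],
    [0, 0, 0, 10, 10],
]

# A simple vertical-edge kernel: right column minus left column.
kernel = [
    [-1, 0, 1],
    [-1, 0, 1],
    [-1, 0, 1],
]

result = convolve2d(image, kernel)
print(result)  # [[0, 30, 30], [0, 30, 30]]
```

The output is zero where the window sits over a flat dark region and large where the window straddles the dark-to-bright edge.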

2. CS231n: Deep Learning for Computer Vision (Stanford)

This is the gold standard. All the lecture videos are available online, and the final assignment will challenge you “to train and apply multi-million parameter networks on real-world vision problems” of your choosing. The course opens by explaining image classification with linear classifiers, but quickly moves into CNNs (by week 5). Plus, one of the lecturers is Fei-Fei Li.

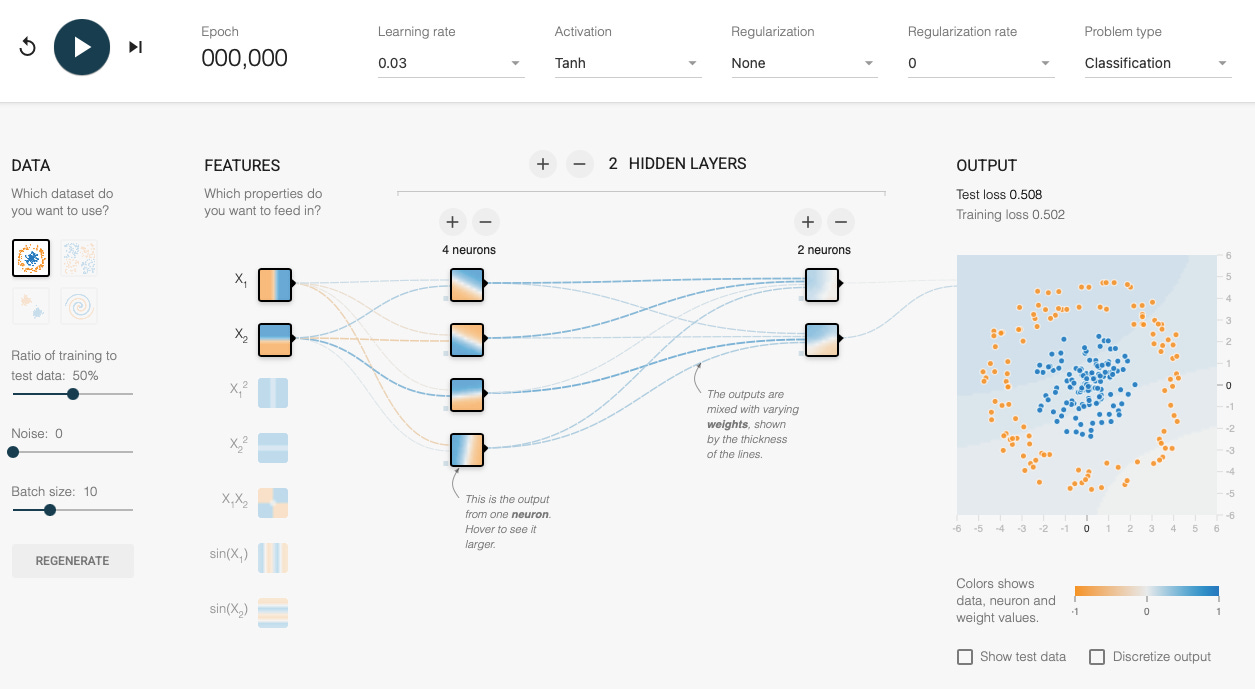

3. TensorFlow Playground

Though not strictly CNN‑focused, TensorFlow Playground is a super friendly entry point into neural nets. You can toy with learning rate, activation functions, and regularization directly in the browser. It’s low‑stakes exploration that builds understanding of what training looks like. Once you’re comfortable with that, CNNs don’t feel quite as exotic.

4. CNN Explainer

This is an interactive explainer on CNNs where you click layers, watch kernels slide, and explore how neurons compute step by step. It doesn’t just tell you what’s happening, but shows it to you. It also runs in your browser with no installs needed.

5. Distill: Feature Visualization

Once you know what CNNs do, you might start to wonder more about how they do it. This article explains it without resorting to too much math. It shows how you can reverse‑engineer what activations “look for,” using optimization to generate what a neuron cares about. It starts from the simplest idea (push the network to activate one neuron) and then explains the pitfalls and tricks, like avoiding noise and encouraging diversity. The visuals are really beautiful.
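The simplest idea, pushing an input until one neuron activates strongly, can be sketched with a toy example. This is not the article's code: the “neuron” here is a single hypothetical linear 3x3 filter rather than a unit inside a trained network, and rescaling the input to unit length after each step is a crude stand-in for the article's regularization tricks.

```python
import math

# Hypothetical "neuron": one 3x3 filter (flattened row by row)
# that responds to dark-to-bright vertical edges.
WEIGHTS = [-1.0, 0.0, 1.0,
           -1.0, 0.0, 1.0,
           -1.0, 0.0, 1.0]


def activation(x):
    """Linear response of the toy neuron to a flattened 3x3 input."""
    return sum(w * xi for w, xi in zip(WEIGHTS, x))


def gradient(x):
    """Gradient of the activation with respect to the input.

    For a linear neuron this is just the weights; a real network
    would compute it with backpropagation.
    """
    return list(WEIGHTS)


def normalize(x):
    """Rescale the input to unit length so it cannot grow without bound."""
    norm = math.sqrt(sum(v * v for v in x))
    return [v / norm for v in x]


def maximize_activation(steps=50, lr=0.1):
    """Gradient ascent on the input, starting from a flat gray patch."""
    x = normalize([1.0] * 9)
    for _ in range(steps):
        g = gradient(x)
        x = [xi + lr * gi for xi, gi in zip(x, g)]
        x = normalize(x)
    return x


start = normalize([1.0] * 9)
best = maximize_activation()
print(round(activation(start), 3), round(activation(best), 3))
```

The optimized patch ends up dark on the left and bright on the right, which is the pattern this filter “looks for.” In a deep network the same loop runs on a whole image, and the results are much harder to predict.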

This week in AI:

With an emphasis on small and open-source models.

Microsoft releases VibeVoice, an open-source text-to-speech model with just 1.5B parameters.

rbio1: A reasoning model for biology.

“Google says it dropped the energy cost of AI queries by 33x in one year.”

Agent-C, an ultra-lightweight AI agent that communicates with an OpenRouter API.

xAI open-sources Grok 2.5.

Using AI to identify sketchy science journals.

Bottlenecks in scaling AI for software engineering.

xAI released grok-code-fast-1, “a speedy and economical reasoning model that excels at agentic coding.”

Hunyuan open-sources their end-to-end Text-Video-to-Audio framework.